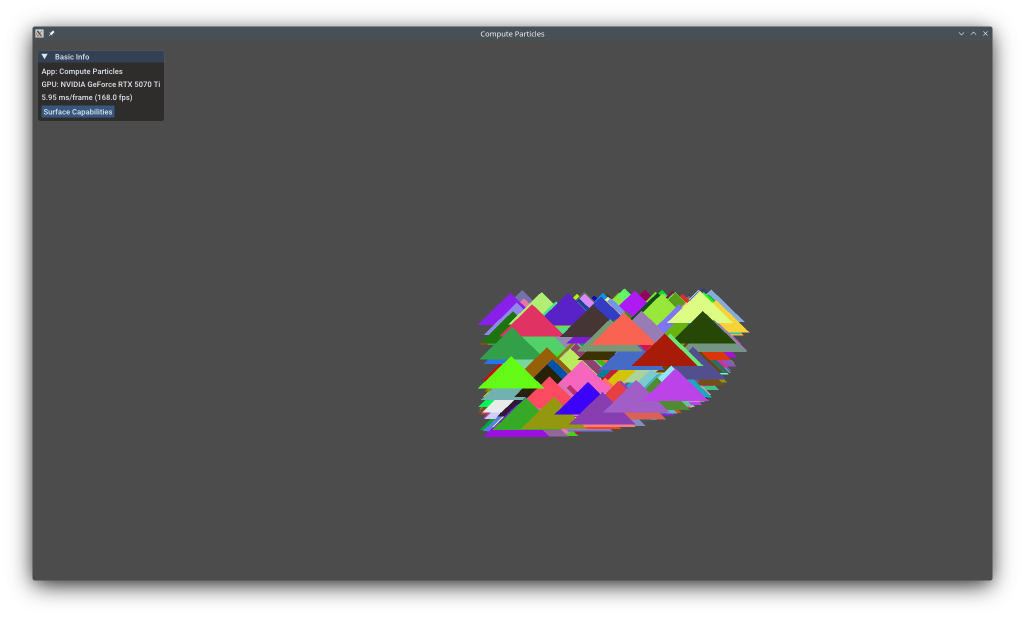

Compute Particles

This example shows how to use compute shaders to simulate thousands of particles entirely on the GPU and then render them with instanced draws. All particle updates (position, velocity, color) run in parallel on the GPU; the CPU does no per-particle work each frame. The same pattern underlies GPU-driven rendering, physics simulations, and particle systems.

The example uses the KDGpuExample helper API for simplified setup.

Overview

What this example demonstrates:

- Compute shaders for data-parallel processing

- Storage buffers (SSBO) for large read-write GPU data

- Compute-to-graphics pipeline synchronization

- Instanced rendering with per-instance data

- GPU work groups and dispatch dimensions

Performance benefit:

- 1024 particles updated in parallel on GPU

- Zero CPU work per particle per frame

- Efficient instanced rendering (3 vertices × 1024 instances)

- Often orders of magnitude faster than CPU particle updates at large particle counts (the actual speedup depends on hardware and workload)

Vulkan Requirements

- Vulkan Version: 1.0+

- Extensions: None (compute shaders are core)

- Device Features: Compute shader support (universal on modern GPUs)

- Limits: Maximum work group size (maxComputeWorkGroupSize, maxComputeWorkGroupInvocations) and maximum compute shared memory (maxComputeSharedMemorySize)

Key Concepts

Compute Shaders:

Compute shaders are general-purpose GPU programs that operate on arbitrary data, not just graphics. Unlike vertex and fragment shaders, which are tied to the graphics pipeline, compute shaders run as a grid of parallel work groups.

Key concepts:

- Work Group: Batch of invocations (threads) executing together

- Local Size: Invocations per work group (e.g., 256)

- Dispatch: Number of work groups to execute (e.g., 1024/256 = 4)

- Global ID: Unique invocation index across all work groups

For 1024 particles with local_size_x=256:

- Dispatch 4 work groups

- Each work group processes 256 particles

- Total: 4 × 256 = 1024 invocations
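The arithmetic above generalizes to particle counts that are not an exact multiple of the local size. The following is a minimal sketch in plain C++; the helper names `workGroupCount` and `globalInvocationId` are illustrative only and are not part of KDGpu or Vulkan. It rounds the dispatch up and shows how a global ID follows from the work group index and the index within the group:

```cpp
#include <cassert>
#include <cstdint>

// Number of work groups needed to cover itemCount items. Rounds up so that
// a partially filled last group is still dispatched.
inline std::uint32_t workGroupCount(std::uint32_t itemCount, std::uint32_t localSize)
{
    assert(localSize > 0);
    return (itemCount + localSize - 1) / localSize;
}

// Mirrors how the global invocation index is derived in a 1D dispatch:
// work group index * local size + index within the group.
inline std::uint32_t globalInvocationId(std::uint32_t workGroupId,
                                        std::uint32_t localId,
                                        std::uint32_t localSize)
{
    assert(localId < localSize);
    return workGroupId * localSize + localId;
}
```

When the count is not a multiple of the local size, the last work group contains invocations whose global ID is past the end of the data, so the shader would need a bounds check before touching the buffer.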

Spec: https://registry.khronos.org/vulkan/specs/1.3-extensions/man/html/VkComputePipelineCreateInfo.html

Storage Buffers (SSBO):

Storage buffers are large, read-write GPU buffers accessible from shaders. Unlike uniform buffers (read-only, size-limited), SSBOs can be:

- Large: Megabytes to gigabytes (vs a typical ~64KB UBO limit; the Vulkan-guaranteed minimum is 16KB)

- Writable: Shaders can modify data

- Structured: Arrays of structs with arbitrary layouts

- Shared: Read by compute, written by compute, read by graphics

Filename: compute_particles/compute_particles.cpp

The particle struct is declared with an identical layout in both the C++ and the shader code, so the CPU and the GPU agree on how the buffer contents are arranged.
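To illustrate what a matching layout involves, here is a hedged sketch of a particle struct made of three 4-component vectors. The member set and the `Vec4` stand-in type are assumptions for illustration; the example's real struct may differ. The `static_assert` checks pin down the offsets a shader-side struct of three `vec4` members would expect:

```cpp
#include <cassert>
#include <cstddef>

// Stand-in for a 4-component float vector (a math library type would
// normally be used). 16-byte alignment matches a GLSL vec4.
struct alignas(16) Vec4 {
    float x, y, z, w;
};

// Hypothetical particle layout: every member is a vec4, so each one starts
// on a 16-byte boundary and no implicit padding is inserted.
struct ParticleData {
    Vec4 position;
    Vec4 velocity;
    Vec4 color;
};

// Compile-time checks that the C++ layout matches a shader struct of
// three vec4 members.
static_assert(sizeof(Vec4) == 16, "vec4 is 16 bytes");
static_assert(offsetof(ParticleData, position) == 0, "position at offset 0");
static_assert(offsetof(ParticleData, velocity) == 16, "velocity at offset 16");
static_assert(offsetof(ParticleData, color) == 32, "color at offset 32");
static_assert(sizeof(ParticleData) == 48, "array stride is 48 bytes");

// Total bytes needed for a buffer holding `count` particles.
constexpr std::size_t particleBufferSize(std::size_t count)
{
    return count * sizeof(ParticleData);
}
```

Using only `vec4`-sized members is a common way to avoid padding surprises, since mixing `vec3` or scalar members can make the C++ and shader layouts diverge.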

Instanced Rendering:

After the compute pass updates the particle data, the graphics pass renders one triangle per particle using instancing. Instancing draws the same geometry (3 vertices) many times, varying only the per-instance data (position, color):

- Base geometry: Triangle shape (3 vertices)

- Per-instance: Particle position/color (1024 instances)

- Result: 1024 triangles with one draw call

Reference (OpenGL wiki; the same concept applies in Vulkan): https://www.khronos.org/opengl/wiki/Vertex_Specification#Instanced_arrays

Implementation

Creating the Storage Buffer:

Filename: compute_particles/compute_particles.cpp

Key flags:

- StorageBufferBit: Accessible as SSBO in shaders

- VertexBufferBit: Also used as vertex buffer for instanced rendering

- CpuToGpu: CPU initializes data, GPU updates it

Vertex Buffer for Shared Triangle:

Filename: compute_particles/compute_particles.cpp

This is the base triangle shape rendered for each particle. All particles share this geometry.

The compute shader:

- Reads particle data (position, velocity, color)

- Updates position based on velocity

- Bounces particles off screen boundaries

- Writes back to same buffer

All 1024 particles are processed in parallel. See the compute shader source for implementation details.
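The per-particle logic listed above can be sketched as a CPU reference in plain C++. This is not the example's shader: the 2D `Particle` struct, the `[-1, 1]` bounds, and the clamp-and-reflect bounce rule are assumptions chosen to keep the illustration short.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Simplified 2D particle; the real example's data layout may differ.
struct Particle {
    float px, py; // position
    float vx, vy; // velocity
};

// One simulation step for a single particle. This is the work a single
// compute invocation would perform.
inline void updateParticle(Particle &p, float dt)
{
    // Integrate position from velocity.
    p.px += p.vx * dt;
    p.py += p.vy * dt;

    // Bounce: clamp the position back onto the boundary of the [-1, 1]
    // square and reflect the corresponding velocity component.
    if (p.px > 1.0f)  { p.px = 1.0f;  p.vx = -p.vx; }
    if (p.px < -1.0f) { p.px = -1.0f; p.vx = -p.vx; }
    if (p.py > 1.0f)  { p.py = 1.0f;  p.vy = -p.vy; }
    if (p.py < -1.0f) { p.py = -1.0f; p.vy = -p.vy; }
}

// Serial stand-in for the dispatch: the loop index plays the role of the
// global invocation ID. On the GPU these iterations run in parallel.
inline void updateAll(std::vector<Particle> &particles, float dt)
{
    for (std::size_t i = 0; i < particles.size(); ++i)
        updateParticle(particles[i], dt);
}
```

Because each iteration reads and writes only its own particle, there are no dependencies between iterations, which is what makes the update safe to run as independent GPU invocations.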

Loading Compute Shader:

Filename: compute_particles/compute_particles.cpp

Compute shaders conventionally use the .comp extension and are submitted to a queue that supports compute; on most hardware the graphics queue supports compute as well.

Compute Pipeline Setup:

Filename: compute_particles/compute_particles.cpp

Note: Compute pipelines are much simpler than graphics pipelines: they need only a shader and a pipeline layout.

The shader uses a specialization constant for the work group size (256), so the size can be chosen when the pipeline is created without recompiling the shader.

Graphics Pipeline with Instancing:

Filename: compute_particles/compute_particles.cpp

Two vertex buffers:

- Binding 0: Triangle geometry (Vertex rate - advances per vertex)

- Binding 1: Particle data (Instance rate - advances per instance)

The inputRate = VertexRate::Instance tells the GPU to use one particle data entry per instance, not per vertex.
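The difference between the two input rates comes down to which index selects the buffer element. The sketch below is a simplified model in plain C++, not KDGpu code: the `InputRate` enum and both helpers are hypothetical, and base vertex and base instance offsets are assumed to be zero.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical stand-in for a binding's input rate.
enum class InputRate { Vertex, Instance };

// Which element of a bound buffer feeds the vertex shader for a given
// (vertexIndex, instanceIndex) pair.
inline std::uint32_t fetchIndex(InputRate rate,
                                std::uint32_t vertexIndex,
                                std::uint32_t instanceIndex)
{
    return rate == InputRate::Vertex ? vertexIndex : instanceIndex;
}

// Byte offset of that element inside the buffer, given the binding stride.
inline std::uint64_t fetchOffset(InputRate rate,
                                 std::uint32_t vertexIndex,
                                 std::uint32_t instanceIndex,
                                 std::uint32_t stride)
{
    return static_cast<std::uint64_t>(fetchIndex(rate, vertexIndex, instanceIndex)) * stride;
}
```

For vertex 2 of instance 500, the triangle binding reads element 2 while the particle binding reads element 500, so all three vertices of one triangle see the same particle record.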

Dispatching Compute and Memory Barrier:

Filename: compute_particles/compute_particles.cpp

Critical steps:

- Dispatch compute: 1024 particles / 256 per work group = 4 work groups

- Memory barrier: Ensures compute writes complete before graphics reads

- srcStages = ComputeShaderBit: Wait for compute to finish

- dstStages = VertexInputBit: Before the vertex input stage fetches attributes

- srcMask = ShaderWriteBit: Compute wrote to buffer

- dstMask = VertexAttributeReadBit: Graphics will read as vertex data

Without the barrier, graphics might read stale particle data (race condition)!

Spec: https://registry.khronos.org/vulkan/specs/1.3-extensions/man/html/VkMemoryBarrier.html

Instanced Draw:

Filename: compute_particles/compute_particles.cpp

The GPU processes 3 vertices × 1024 instances = 3072 vertices in total, but only 3 vertices of geometry plus 1024 particle records are stored in memory.
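The saving can be made concrete with a small calculation. This sketch uses a hypothetical `DrawFootprint` struct and helper functions (none are part of any graphics API) to compare an instanced draw with the equivalent draw whose geometry has been expanded per particle:

```cpp
#include <cassert>
#include <cstdint>

// Work done and data stored for one draw call.
struct DrawFootprint {
    std::uint64_t vertexInvocations; // vertex shader runs
    std::uint64_t storedElements;    // buffer elements kept in memory
};

// Instanced: geometry is stored once, plus one record per instance.
inline DrawFootprint instancedDraw(std::uint32_t vertsPerInstance, std::uint32_t instances)
{
    const std::uint64_t v = vertsPerInstance;
    const std::uint64_t n = instances;
    return { v * n, v + n };
}

// Non-instanced equivalent: every vertex of every particle is stored.
inline DrawFootprint expandedDraw(std::uint32_t vertsPerInstance, std::uint32_t instances)
{
    const std::uint64_t total = static_cast<std::uint64_t>(vertsPerInstance) * instances;
    return { total, total };
}
```

Both variants run the vertex shader the same number of times; the instanced form stores far fewer elements and needs no per-particle geometry duplication.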

Performance Notes

Compute Performance:

- Work Group Size: 256 works well on most GPUs; prefer a multiple of the hardware warp/wavefront width (32 or 64)

- Memory Access: Sequential access pattern (particle[i]) is cache-friendly

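- ALU vs Memory: With only a few arithmetic operations per particle, this shader is more likely limited by memory bandwidth than by ALU throughput; at 1024 particles neither is a practical bottleneck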

- Occupancy: A small local size may limit GPU occupancy; try 512 or 1024 if the device's maxComputeWorkGroupInvocations limit allows it (Vulkan only guarantees 128)

Graphics Performance:

- Instancing: 1024 instances in one draw vs 1024 draws = massive CPU savings

- Vertex Reuse: 3-vertex triangle reused 1024 times (good cache hit rate)

- Per-Instance Data: The particle buffer is read through an instance-rate vertex binding, which is fast on modern GPUs

- Overdraw: Particles may overlap; consider depth sorting or alpha blending

Scaling:

- Easy to scale to 100K+ particles by increasing particle count

- Ensure dispatch dimensions stay within limits (65535 work groups)

- For huge counts, consider GPU frustum culling and indirect draws
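As a rough guide to where the dispatch limit starts to matter, the sketch below checks a particle count against a one-dimensional dispatch. The helper names are illustrative. The default of 65535 is the minimum Vulkan guarantees for each component of `maxComputeWorkGroupCount`; a real application would query the device limit, which is often higher.

```cpp
#include <cassert>
#include <cstdint>

// Minimum guaranteed value for each component of maxComputeWorkGroupCount.
constexpr std::uint32_t kGuaranteedMaxGroups = 65535;

// True if particleCount can be covered by a single 1D dispatch.
inline bool fitsInOneDimension(std::uint64_t particleCount,
                               std::uint32_t localSize,
                               std::uint32_t maxGroups = kGuaranteedMaxGroups)
{
    assert(localSize > 0);
    const std::uint64_t groups = (particleCount + localSize - 1) / localSize;
    return groups <= maxGroups;
}

// Largest particle count a single 1D dispatch can cover.
inline std::uint64_t maxParticlesInOneDimension(std::uint32_t localSize,
                                                std::uint32_t maxGroups = kGuaranteedMaxGroups)
{
    return static_cast<std::uint64_t>(maxGroups) * localSize;
}
```

With a local size of 256, a 1D dispatch covers roughly 16.7 million particles before a second dispatch dimension or multiple dispatches would be needed, so 100K particles fit comfortably.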

Memory Barriers:

- Barriers have small cost but are essential for correctness

- Could optimize with separate compute/graphics queues (advanced)

- Pipeline barriers are cheaper than full device waitIdle

See Also

- Dynamic Uniform Buffer - Dynamic uniform buffers for per-object data

- Order Independent Transparency (OIT) with Compute - Advanced compute shader usage

- Compute Shaders - Comprehensive guide

- Vulkan Compute Guide - Official Vulkan compute overview

- Dispatch Commands - Compute dispatch

- Memory Barriers - Synchronization primitives

Further Reading

- GPU Computing Gems - NVIDIA GPU computing techniques

- Work Group Size Optimization - Choosing optimal sizes

Updated on 2026-06-04 at 00:01:10 +0000